(graphic from Youth Endowment Fund 2024)

The Home Office and the Department for Education have announced measures claiming that “the next generation of girls will be better protected from violence and young boys steered away from harmful misogynistic influences”. We are supposed to rejoice that the government will be “tackling” the misogyny in the classroom that we are told is endemic.

The measures will include a helpline for girls to report abuse and “behavioural courses” for boys – compulsory re-education in the best communist fashion. The Prime Minister stated “Every parent should be able to trust that their daughter is safe at school…This is about protecting girls…”

Social media and online “influencers” are blamed for “radicalising young men” and a link is claimed between such “radicalisation” of schoolboys and violence against women and girls which, the government tell us, is a “national emergency”. The domestic abuse commissioner for England and Wales, Dame Nicole Jacobs, said the measures did “not go far enough”.

But I have a difficulty with all this. Whilst everyone is familiar with the word “misogyny”, fewer are familiar with “misandry”, the equivalent when the target of abuse or discrimination is male. And yet the two things are of broadly equal prevalence, and sometimes men or boys are the majority of victims.

A 2022 report from Ofcom on exposure to harmful material online concluded, “Overall, men are more likely than women to have experienced potentially harmful online behaviour or content in the last four weeks (64% vs 60%)”. And yet the title of that report was “Ofcom urges tech firms to keep women safer online”. One wonders why the other half of society warranted no mention despite leading the findings. A similar result was reported in Ofcom’s 2024 One Nation report which again indicated that men were more likely than women to have experienced potentially harmful online behaviour or content. For teenagers that was reversed, but the percentages remain closely comparable (66% and 73% respectively).

This is hardly new. A survey by Pew Research in 2014 found that “Overall, men are somewhat more likely than women to experience at least one of the elements of online harassment, 44% vs. 37%”.

Earlier this year, computer scientist and data analyst Erica Coppolillo published an article in Nature’s Scientific Reports which analysed gendered hate speech (her phrase) on four openly declared misogynistic or misandric Reddit communities. Her conclusion: “The performed analyses reveal that no systematic differences can be devised across the misogynistic and misandric communities”.

A similar disconnect between the popular narrative and the data exists in domestic abuse. According to the latest ONS data, 41.7% of adult domestic abuse victims are men and just over half of current victims of partner abuse are men. Apparently this is not a national emergency.

The comparable victimisation of the two sexes in these matters has a long history – a history notable principally for being kept from public attention.

But what about bad behaviour by school children? Is this also comparable between boys and girls? It would seem so…except when girls are worse, that is. According to surveys by the Youth Endowment Fund (2025) there is little difference between the percentages of boys and girls aged 13-17 who have participated in discussions about harming specific groups (38% cf 34%).

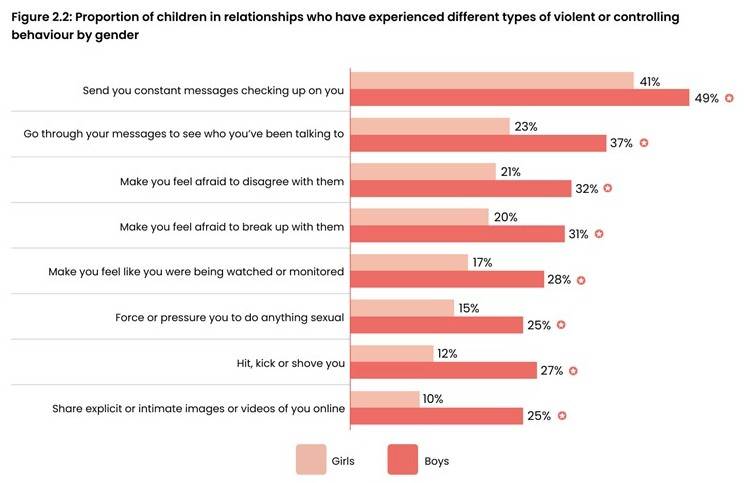

The Youth Endowment Fund’s 2024 report surveyed over 10,000 children aged 13-17 in England & Wales. It should be common knowledge that boys are more likely to be victims of violence than girls and the findings of the report again confirm that. Of greater interest are the findings on abusive behaviours in relationships. The report summarises this as “Contrary to common perceptions, boys report experiencing higher rates of violent and controlling behaviours from their partners compared to girls”. The breakdown into specific behaviours is shown in the graphic which heads this post. In words the relative victimisation was found to be,

- At least one violent or controlling behaviour from their partner: 57% of boys cf 41% of girls. (This is equivalent to 16% and 11% of all 13-17-year-old boys and girls respectively).

- A partner has sent constant messages checking up on you: 49% of boys cf 41% of girls;

- A partner has gone through their phone or social media to see who they’ve been talking to: 37% of boys cf 23% of girls;

- Made to feel afraid to disagree with their partner: 32% of boys cf 21% of girls;

- Made to feel afraid to break up with their partner: 31% of boys cf 20% of girls;

- Made to feel you were being watched or monitored: 28% of boys cf 17% of girls;

- Forced or pressured you to do something sexual: 25% of boys cf 15% of girls;

- Hit, kicked or shoved you: 27% of boys cf 12% of girls;

- Shared explicit or intimate images or videos of you online: 25% of boys cf 10% of girls.

In short, girls are more controlling, more violent and more sexually predatory in their relationships than boys. For any old traditional conservatives who insist that all girls are sugar and spice and all things nice please be aware that that was always partly fantasy and, perhaps more especially, that girls are not what they were 50 years ago.

This may be contrary to popular perceptions, but only because empirical reality has been systematically kept from public view. It is actually unsurprising. One sex, boys, has already had it drummed into them for decades that these behaviours are reprehensible and will be punished. The other sex, girls, has not. And where bad behaviours go unpunished, and even hidden, of course they will increase. This is the actual background which a profoundly stupid and partisan government is determined to exacerbate.

And recall that boys in school know very well what the reality is without the need for a survey. And this is the backcloth against which they are the ones, exclusively, to receive a bashing (again).

I am thankful that I do not have school-age sons. If I had school-age daughters now I would be confident they would thrive at school, do well academically and not be subject to endemic discrimination based on their sex. For boys I would be fearful about all those things.

Why is it that, fifty years ago, it was axiomatic that girls’ underachievement at school arose from systemic prejudice, whereas now that boys have been underachieving for decades it is equally axiomatic that it is the boys’ own fault – for being boys?

The answer, I believe, lies in the motivation for this latest round of boy bashing. Politicians are ever keen to appear to occupy the moral high ground and the protection of women and girls is always a winner. In contrast, a balanced perspective based on the empirical reality just doesn’t carry the same virtue-signalling brownie points. Indeed, an even-handed policy risks the accusation of misogyny being turned against those same politicians, thus guaranteeing they will never go there. In contrast, there’s never any downside to bashing boys.

Boys themselves, of course, will be very aware of the reality; as will girls. One does not need to be an expert psychologist to anticipate that boys subject to this draconian suppression will harbour resentment. It is inevitable, and well deserved.

One wonders whether any of the ministers with longstanding partisan positions are capable of acknowledging that it is not online influencers that have opened a gulf in understanding between the sexes, but they themselves. One wonders if they realise that they are going the right way to create the monster of their own imaginings.

And what is the reality of boys’ experience at school now? A survey of sixth form pupils by Civitas revealed that 41 per cent of the pupils reported they had been taught that young men are a problem for society. Why would anyone expect young men to be positively disposed towards a society which openly despises them?

We have surely reached the point where some parents will be asking themselves whether they want to send their sons to a mixed-sex school. The curious thing about calls for single-sex education after age 11 is that they never seem to come from the lobby that expresses such concern over the safety of girls. Could it be that the last thing they want is for boys to escape their clutches?